You Win the AI Game When You Start with the Boring Part

Most AI pilots in management contexts fail not because the tech doesn't work, but because the scope is too big to survive its own first mistake. The fix is restraint.

Most leaders fail their AI pilot the moment they choose the workflow.

Years ago, before any of this AI noise, I had a senior DevOps engineer on my team who wanted to use Kafka for a queue. The job was small. SQS would have done it, easily. But Kafka was the answer he'd already arrived at, and he was the kind of senior engineer whose judgement you don't dismiss lightly.

I told him to do both. Start with SQS. If it breaks, we'll move to Kafka.

SQS worked out of the box and stayed out of the way. Kafka needed constant tinkering for a use case it was overbuilt for. We never moved. This was never really about Kafka. The problem was reaching for the bigger system before we'd earned the right to.

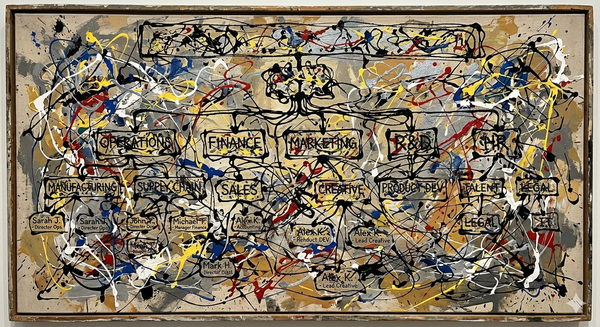

AI mandates put managers in the same position, except the marketing is louder and the slide deck is fancier. The vendor pitch is end-to-end: ticket comes in, AI triages, AI drafts the response, AI escalates the upset customers, AI updates the knowledge base from the resolution, AI summarises the week. Five AI steps, one rollout, one quarterly OKR. The leader signs the contract. Three weeks in, one piece misbehaves and the whole thing gets pulled. Now the team is more sceptical of AI than they were before, and the CEO is asking pointed questions about ROI.

The scope is what breaks. The technology rarely gets a chance to.

The pilot that doesn't fail starts narrow

The instinct, when you get an AI mandate, is to pick a workflow and automate the workflow. Customer support. Procurement. Onboarding. Whatever process is currently painful and observable. Let me tell you why that's the wrong unit.

A workflow has six or eight or twelve steps, each with its own edge cases and each owned by someone with their own stake. When you automate the workflow, you're rolling out a system that has to clear all of those bars at once. The probability that at least one of them fails is high. The cost of that one failure is the credibility of the whole thing.

You don't actually know what AI is good at in your specific context yet. You know what the demo showed you. The demo was a curated environment with clean data, clean prompts, and a salesperson steering. Your environment is messier in ways the demo can't account for, and the only way to learn what AI handles well in your mess is to put it in front of one small piece of that mess and watch.

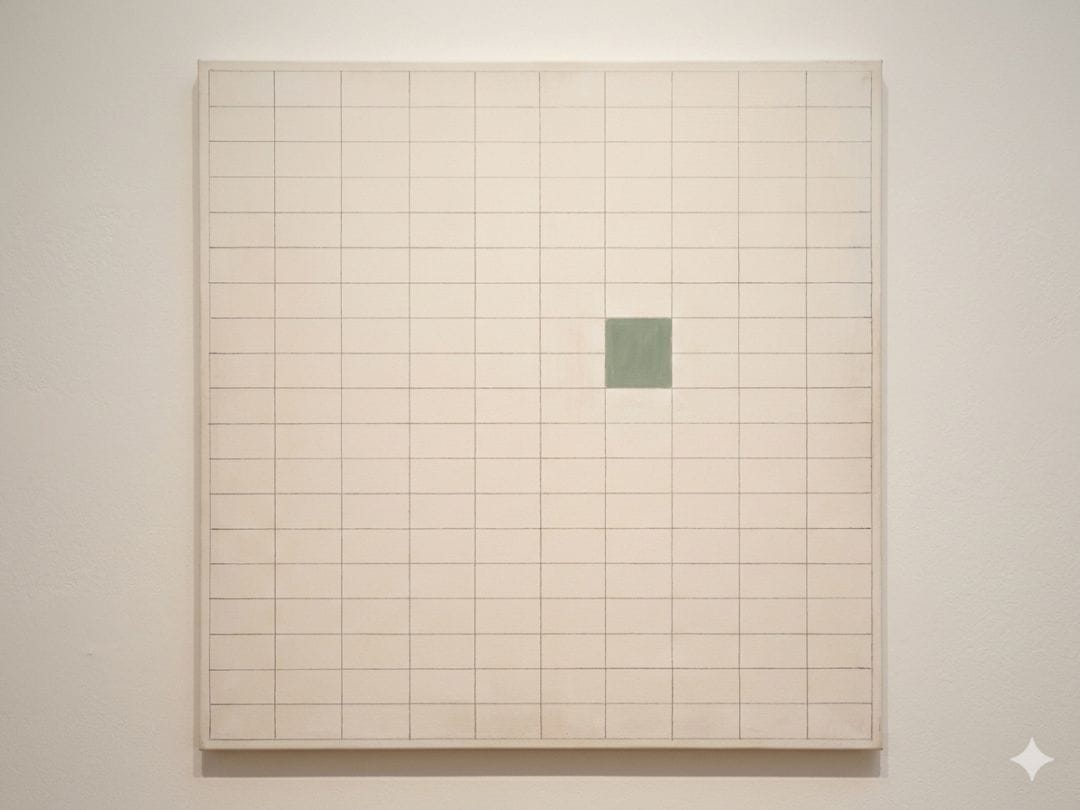

So pick the smallest, most boring, lowest-stakes slice of a process and put AI there. Not because you're being humble. Because you're learning what the tool actually does in your hands.

What "boring" actually looks like

In a support team, the boring part is the first draft of the response, dropped into a field the human agent edits before sending. The agent is still on the hook. The customer still talks to a person. But the agent starts each ticket with a reply that's 60% done instead of a blank one. After a month, you know whether the drafts are useful, where they fail, and which ticket types they handle well. You've spent zero credibility, because the agent was always going to be the safety net. And you've learned more about AI in your context than any pilot deck would have told you.

In a leadership team, the boring part is turning the meeting into action items. Record, transcribe, summarise, list owners and dates. Drop it in the Slack channel within ten minutes of the meeting ending. That's it. No AI in the room, no AI making decisions, no AI talking to anyone who matters. Just the most thankless five-minute task on the manager's plate, done before the manager has reopened her laptop. After two months you'll know whether your team trusts those summaries, which is itself one of the most useful pieces of information you can have about whether AI fits how you actually work.

Both of these are boring on purpose. The boredom is the feature.

The discipline is restraint

If you choose the small thing first, you give yourself room to be wrong. The first-draft tool turns out to be useless? You turn it off, the agents go back to writing from scratch, nobody's job changed. The meeting summariser hallucinates a deadline? You catch it the first time, calibrate, or kill it. There's no rollback meeting. There's no "we tried AI and it didn't work" narrative. There's just one small boring automation that did or didn't earn its place.

Most managers I talk to skip this and reach straight for the workflow, because the workflow is what the C-suite wants to hear about. "We're rolling out AI in customer support" sounds like a story. "We're auto-drafting replies for agents to edit" sounds like a random Tuesday. Yet the Tuesday version is the one that works.

You can build the workflow. Eventually. After you've shipped the reply drafts, the meeting summary, the weekly digest, and the documentation pull, and watched each of them long enough to know which ones your team actually relies on. The workflow is what a year of small wins eventually compounds into. Trying to ship it as a quarterly plan is how you end up with a pulled rollout and a sceptical team.

You win the AI game when you start with the boring part. The exciting part shows up on its own, later, made of all the boring parts you got right. The boring parts compound.